25 Feb 2026

Understanding Rate Limiting and Request Throttling in Web APIs

As developers, we know that systems often fail not because the design is poor, but because demand spikes beyond what the platform can safely handle. A product launch goes viral, a new partner starts integrating faster than expected, or a mobile app suddenly gets featured. Within minutes, request volumes can surge beyond planned capacity.

Modern businesses depend on APIs: they connect internal services, mobile apps, analytics tools, and partner systems. When an API slows down or stops responding, the impact is immediate, leading to failed transactions, broken customer experiences, and a flood of support tickets.

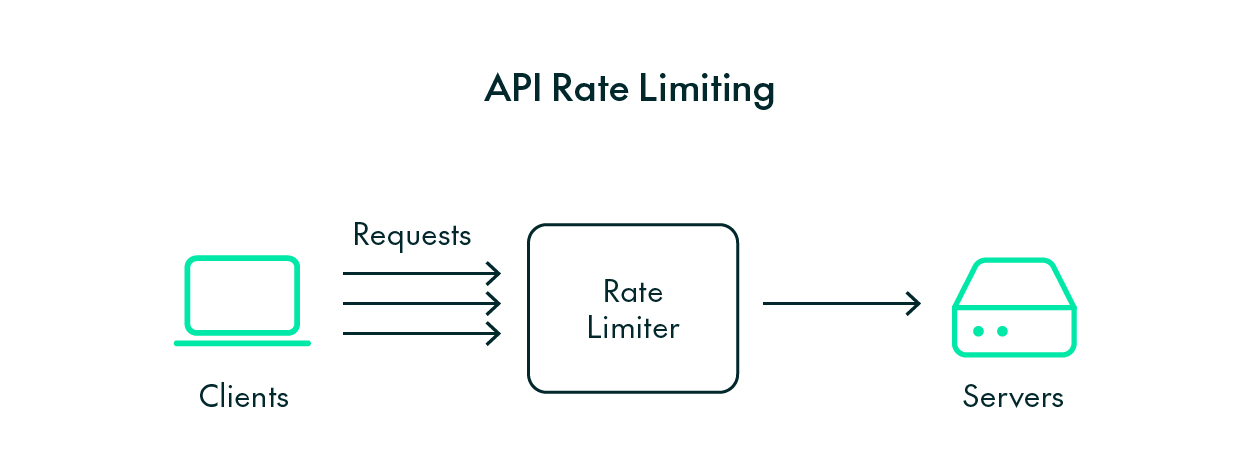

That’s why we build protective mechanisms that keep services stable under pressure, and two of the most effective are rate limiting and request throttling. They might sound purely technical, but their value is business-critical, safeguarding reliability, user experience, and brand trust.

Rate Limiting and Request Throttling Explained

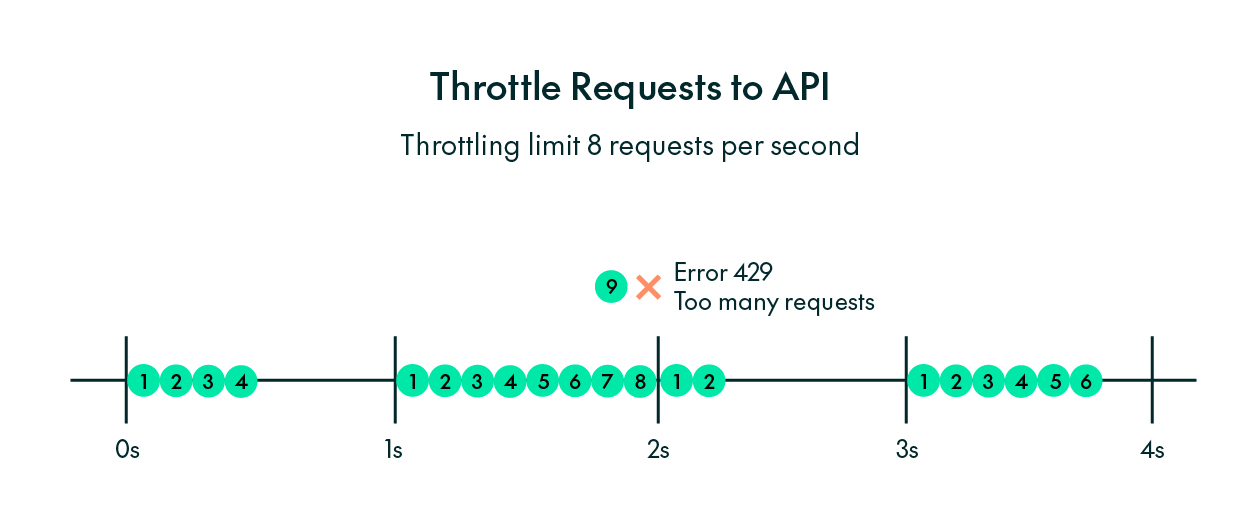

At a basic level, rate limiting sets a boundary on how many requests a client can send within a specific time frame. For example, an API might allow eight requests per second per user, and if a client exceeds that, the server starts rejecting new requests until the window resets.
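To make the idea concrete, here is a minimal Python sketch of the simplest scheme, a fixed-window counter, using the eight-requests-per-second figure above. The class and method names are our own illustration rather than any particular library’s API, and the sketch assumes a single process keeping its counters in memory.

```python
import time
from typing import Callable, Dict, Tuple


class FixedWindowLimiter:
    """Allow at most `limit` requests per client in each fixed window of `window_seconds`."""

    def __init__(self, limit: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.limit = limit
        self.window = window_seconds
        self.clock = clock  # injectable so the behaviour can be tested without sleeping
        # client_id -> (index of the window being counted, requests seen in it)
        self.counters: Dict[str, Tuple[int, int]] = {}

    def allow(self, client_id: str) -> Tuple[bool, float]:
        """Return (allowed, seconds until the current window resets)."""
        now = self.clock()
        window_index = int(now // self.window)
        index, count = self.counters.get(client_id, (window_index, 0))
        if index != window_index:
            count = 0  # a new window has started, so the count resets
        seconds_to_reset = (window_index + 1) * self.window - now
        if count >= self.limit:
            return False, seconds_to_reset  # the caller would answer HTTP 429 here
        self.counters[client_id] = (window_index, count + 1)
        return True, seconds_to_reset
```

Calling `limiter.allow("user-42")` returns whether to serve the request and how many seconds remain until the window resets, which maps naturally onto a 429 response with a Retry-After value. Fixed windows are easy to reason about, but a client can squeeze up to twice the limit into a short span around a window boundary (eight requests just before the reset and eight just after), which is one reason smoother algorithms such as Token Bucket are popular.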

You’ve probably seen this happening without realising it: when an application suddenly stops updating or returns a message like HTTP 429 Too Many Requests, that’s rate limiting at work. It’s the server’s way of saying, “Please slow down: I need a moment to process what I already have!”

From a business perspective, this message isn’t an error so much as a sign of a healthy, well-protected system. Instead of crashing, the API clearly signals that the client has exceeded its allowance for now and prompts it to retry later.

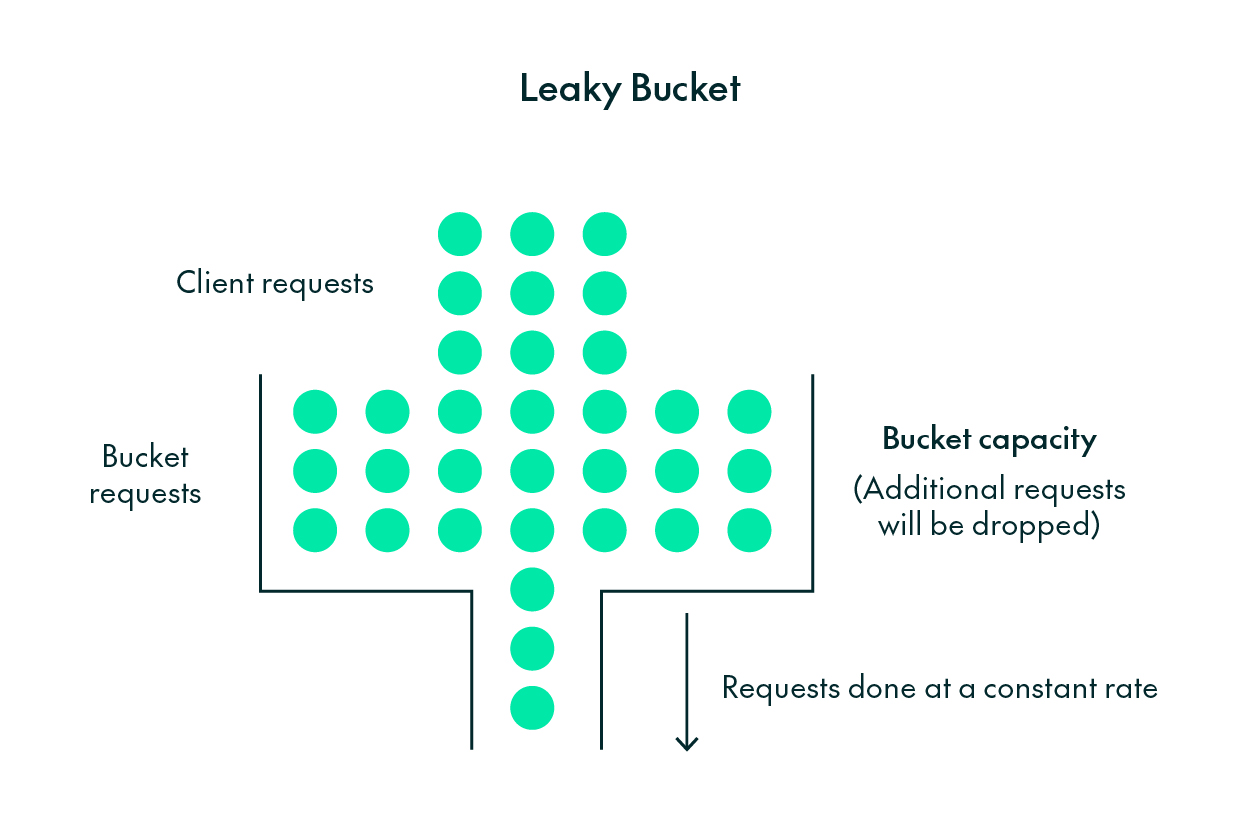

Throttling, in contrast, doesn’t reject requests; it slows them down. Imagine a traffic light at a busy intersection: when traffic is heavy, it manages the flow to prevent gridlock. In web systems, throttling works in the same way. It lets some requests through immediately whilst delaying others, ensuring the service remains responsive.
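As a rough illustration of the difference, the hypothetical `Throttle` class below never rejects anything: each caller reserves the next free slot and sleeps until it arrives, so the first request goes straight through and later ones are spaced out evenly. It is a minimal, single-process sketch that assumes a fixed target rate.

```python
import threading
import time
from typing import Callable


class Throttle:
    """Space requests out to at most `rate_per_second`, delaying rather than rejecting them."""

    def __init__(self, rate_per_second: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.interval = 1.0 / rate_per_second   # minimum gap between request starts
        self.clock = clock                      # injectable for testing
        self.next_slot = clock()                # earliest time the next request may start
        self.lock = threading.Lock()            # callers may be on different threads

    def reserve(self) -> float:
        """Book the next free slot and return how many seconds the caller must wait for it."""
        with self.lock:
            now = self.clock()
            slot = max(now, self.next_slot)     # idle time earns no extra credit
            self.next_slot = slot + self.interval
            return slot - now

    def wait(self) -> None:
        """Block the calling thread until its reserved slot arrives, then return."""
        delay = self.reserve()
        if delay > 0:
            time.sleep(delay)
```

With `Throttle(rate_per_second=4)`, three back-to-back calls are told to wait 0, 0.25 and 0.5 seconds respectively. Because idle time does not build up credit, this version allows no bursts at all; a token bucket relaxes exactly that constraint.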

Under the hood, these mechanisms often rely on simple but effective algorithms such as Token Bucket or Leaky Bucket. A token bucket holds tokens that represent available request capacity and refills them at a steady rate: each request consumes a token, and when the bucket is empty, clients must wait until new tokens become available. A leaky bucket works the other way round, queuing incoming requests and releasing them at a constant rate. The maths behind them is elegant, but the aim is simple: keep traffic fair, balanced, and predictable.
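A token bucket fits in a few lines. The Python sketch below is one common way to write it, assuming an in-memory bucket per client and a refill calculated lazily from elapsed time rather than by a background timer; the names are illustrative.

```python
import time
from typing import Callable


class TokenBucket:
    """Holds up to `capacity` tokens, refilled continuously at `refill_rate` tokens per second."""

    def __init__(self, capacity: float, refill_rate: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.refill_rate = refill_rate
        self.clock = clock
        self.tokens = capacity        # start full, so an initial burst is allowed
        self.last_refill = clock()

    def _refill(self) -> None:
        # Add the tokens earned since the last call, never exceeding capacity.
        now = self.clock()
        elapsed = now - self.last_refill
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_rate)
        self.last_refill = now

    def try_consume(self, cost: float = 1.0) -> bool:
        """Spend `cost` tokens if available; return False if the bucket is too empty."""
        self._refill()
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False

    def seconds_until(self, cost: float = 1.0) -> float:
        """How long until `cost` tokens will be available (0.0 if they already are)."""
        self._refill()
        missing = cost - self.tokens
        return max(0.0, missing / self.refill_rate)
```

A bucket created with `capacity=8, refill_rate=8` lets a client burst eight requests at once and then sustain eight per second. When `try_consume` returns `False`, a rate limiter would reply with HTTP 429 (with `seconds_until()` as a natural Retry-After value), whereas a throttle would wait that long and try again.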

Why They Matter for Business Stability

For businesses, uptime is everything. Every second a service is down, users lose trust and transactions stop. Rate limiting and throttling help to prevent those situations by keeping systems within their safe operating limits.

When load spikes, for example during a marketing campaign or a data synchronisation job, these mechanisms allow the system to degrade gracefully. Instead of collapsing completely, it slows certain operations whilst keeping the rest available, and it’s exactly this type of controlled behaviour that separates resilient platforms from fragile ones.

Technically, these limits also safeguard shared resources like databases, message queues, and third-party APIs. Without these guardrails in place, a single client or internal process could overwhelm the service, affecting everyone. Rate limiting supports fair access and more predictable performance across users and workloads.
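Fairness usually comes from keeping a separate allowance per client. The sketch below does that with a sliding-window log, a different technique from the token bucket: it remembers each client’s recent request timestamps and counts only those inside a rolling window. Client identifiers and limits are placeholders, and everything lives in one process’s memory.

```python
import time
from collections import defaultdict, deque
from typing import Callable, Deque, Dict


class SlidingWindowLog:
    """Per-client limit: at most `limit` requests in any rolling `window_seconds` period."""

    def __init__(self, limit: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.limit = limit
        self.window = window_seconds
        self.clock = clock
        # Each client gets its own log, so one noisy client cannot use up another's allowance.
        self.logs: Dict[str, Deque[float]] = defaultdict(deque)

    def allow(self, client_id: str) -> bool:
        now = self.clock()
        log = self.logs[client_id]
        # Drop timestamps that have slid out of the rolling window.
        while log and log[0] <= now - self.window:
            log.popleft()
        if len(log) >= self.limit:
            return False
        log.append(now)
        return True
```

Because each client has its own log, a runaway batch job exhausts only its own allowance while other clients carry on unaffected. The trade-off is memory proportional to the limit for each client, and in a deployment with several API instances the counts would need to live in a shared store rather than a local dictionary.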

From a broader perspective, rate limiting and throttling work alongside caching, asynchronous queues, and observability tools: caching reduces repeated work, queues spread tasks over time, and observability enables teams to detect and respond before incidents escalate. Together, these practices form a safety net that allows businesses to grow without breaking their systems.

What Happens on the Client Side

When a client application hits a rate limit, the experience depends on how the system is designed. Most APIs respond with HTTP 429 Too Many Requests. Better-designed APIs also include a Retry-After header, which tells the client how long to wait before trying again, either as a number of seconds or as a specific date and time.
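Because Retry-After can carry either form, clients need to handle both. Here is a small Python helper, an illustrative sketch using only the standard library, that turns either form into a wait in seconds and returns `None` when the header is missing or unreadable.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime
from typing import Optional


def retry_after_seconds(header_value: Optional[str],
                        now: Optional[datetime] = None) -> Optional[float]:
    """Convert a Retry-After header into a wait time in seconds.

    Accepts delay-seconds ("120") or an HTTP date ("Wed, 25 Feb 2026 10:00:00 GMT").
    Returns None if the header is absent or cannot be parsed.
    """
    if header_value is None:
        return None
    value = header_value.strip()
    if value.isascii() and value.isdigit():
        return float(value)
    try:
        when = parsedate_to_datetime(value)
    except (TypeError, ValueError):  # older Pythons raise TypeError, newer ValueError
        return None
    if when.tzinfo is None:
        # HTTP dates are always GMT, so treat a naive result as UTC.
        when = when.replace(tzinfo=timezone.utc)
    now = now or datetime.now(timezone.utc)
    # A date in the past simply means "retry now".
    return max(0.0, (when - now).total_seconds())
```

For example, `retry_after_seconds("120")` gives `120.0`, and a date that has already passed yields `0.0`.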

Well-built client software uses these signals intelligently: instead of hammering the server with repeated retries, it waits, backs off, or temporarily switches to cached data. When the server gives no Retry-After value, a common strategy is exponential backoff, where the client increases the delay after each failed attempt, usually adding a little random jitter so that many clients don’t all retry at the same moment.
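The retry loop can be sketched as follows. It assumes a hypothetical request function that returns the HTTP status code and, if present, the Retry-After value in seconds; the server’s hint is used when available, and otherwise the delay comes from exponential backoff with “full jitter” (a random wait between zero and an exponentially growing ceiling). The base delay, cap and attempt count are arbitrary example values.

```python
import random
import time
from typing import Callable, Optional, Tuple

# Hypothetical interface: a request returns (status_code, retry_after_seconds or None).
RequestFn = Callable[[], Tuple[int, Optional[float]]]


def backoff_delay(attempt: int, base: float = 0.5, cap: float = 30.0,
                  rng: Optional[random.Random] = None) -> float:
    """Full-jitter backoff: a random wait between 0 and min(cap, base * 2**attempt)."""
    ceiling = min(cap, base * (2 ** attempt))
    return (rng or random).uniform(0.0, ceiling)


def call_with_backoff(request: RequestFn, max_attempts: int = 5,
                      sleep: Callable[[float], None] = time.sleep,
                      rng: Optional[random.Random] = None) -> int:
    """Retry while the server answers 429, preferring its Retry-After over our own backoff."""
    status = 429
    for attempt in range(max_attempts):
        status, retry_after = request()
        if status != 429:
            return status
        if attempt == max_attempts - 1:
            break  # out of attempts: give up without a final pointless wait
        delay = retry_after if retry_after is not None else backoff_delay(attempt, rng=rng)
        sleep(delay)
    return status
```

Passing `sleep` in as a parameter keeps the loop easy to test and lets an async client swap in its own waiting mechanism; in the app itself, the final 429 is the point at which it would fall back to cached data or a “please wait” message.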

For the end user, the result is usually a brief pause or a message such as “Please wait a few seconds,” whilst the app itself remains usable. From a business perspective, this is ideal: the user experience stays smooth and the platform stays stable.

Real-World Scenarios

Consider an e-commerce platform preparing for Black Friday. Thousands of users hit the site at once, browsing products, adding items to their shopping baskets, then checking out. Without request controls, the API could quickly overload and bring the entire shopping experience to a grinding halt. Rate limiting caps how many requests each client can send, so no single user or script can crowd out the rest and every customer has a fair chance to complete a purchase.

Now imagine a ticketing system for a major event. When sales open, millions of fans rush in at the same time. Throttling smooths the surge by ensuring requests are processed in batches, preventing the servers from going down whilst keeping the queue moving.
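Batch admission of this kind is often implemented as a virtual waiting room. The deliberately simplified sketch below, with hypothetical names and an in-memory queue, lets fans join in arrival order and releases a fixed-size batch each time a timer ticks.

```python
from collections import deque
from typing import Deque, List


class WaitingRoom:
    """Admit queued users in fixed-size batches, one batch per timer tick (hypothetical sketch)."""

    def __init__(self, batch_size: int) -> None:
        self.batch_size = batch_size
        self.queue: Deque[str] = deque()

    def join(self, user_id: str) -> int:
        """Add a user to the back of the queue and return their 1-based position."""
        self.queue.append(user_id)
        return len(self.queue)

    def admit_next_batch(self) -> List[str]:
        """Called on a timer (e.g. once per second): release the next batch in arrival order."""
        batch: List[str] = []
        while self.queue and len(batch) < self.batch_size:
            batch.append(self.queue.popleft())
        return batch
```

With a batch size of 2 and five fans queued, successive ticks admit `["a", "b"]`, then `["c", "d"]`, then `["e"]`. Tuning the batch size and tick interval against what the back end can comfortably handle is what keeps the queue moving without overloading the servers.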

This isn’t limited to consumer products: internal analytics platforms, data pipelines, and B2B integrations all rely on steady request flow. When one system floods another with data, throttling keeps communication balanced, avoiding failures that can ripple across teams, departments, or partners.
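Between internal systems, one common way to achieve this is backpressure through a bounded queue: when the receiver falls behind, the sender is made to wait instead of piling up unbounded work. The asyncio sketch below illustrates the idea with a simulated workload; the record type, queue size and processing time are stand-ins, not recommendations.

```python
import asyncio
from typing import List, Optional, Tuple


async def produce(queue: "asyncio.Queue[Optional[int]]", count: int) -> int:
    """Send `count` records and return the largest queue length observed."""
    peak = 0
    for record in range(count):
        # put() suspends while the queue is full, so a fast sender
        # is automatically slowed to the receiver's pace.
        await queue.put(record)
        peak = max(peak, queue.qsize())
    await queue.put(None)  # end-of-stream marker
    return peak


async def consume(queue: "asyncio.Queue[Optional[int]]", processed: List[int],
                  seconds_per_record: float) -> None:
    while True:
        record = await queue.get()
        if record is None:
            return
        await asyncio.sleep(seconds_per_record)  # stand-in for real downstream work
        processed.append(record)


async def run_pipeline(count: int = 20, max_in_flight: int = 5,
                       seconds_per_record: float = 0.001) -> Tuple[List[int], int]:
    queue: "asyncio.Queue[Optional[int]]" = asyncio.Queue(maxsize=max_in_flight)
    processed: List[int] = []
    peak, _ = await asyncio.gather(produce(queue, count),
                                   consume(queue, processed, seconds_per_record))
    return processed, peak
```

Because `put()` suspends whenever the queue already holds `max_in_flight` items, the producer can never run more than five records ahead of the consumer, however fast it generates them.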

How Developers and Business Teams Work Together

When business leaders ask, “What happens if our traffic suddenly doubles?”, developers are already a few steps ahead. We don’t just hope the system will cope; we design it to cope.

We can explain what safeguards are in place: which limits are configured, how usage is monitored, and how the system behaves when limits are reached. We can also simulate high-load scenarios, share performance graphs, and demonstrate how throttling keeps the experience consistent under stress.

In practice, this collaboration builds confidence. Businesses understand the trade-offs, including the fact that stability sometimes means delaying a few requests. Engineering, in turn, can align decisions with priorities such as uptime, cost efficiency, and customer experience.

Ultimately, this helps both sides to speak the same language. For developers, reliability is the product; for the business, it’s what earns trust.

Conclusion: Stability Is a Business Feature

When APIs are reliable, products feel effortless. Customers don’t notice rate limiting or throttling; they just experience fast, consistent service. And that’s exactly the point!

These mechanisms don’t limit growth; they enable it. They let businesses scale safely, handle unpredictable spikes, and deliver the reliability users expect.

In the end, rate limiting and throttling aren’t just technical safeguards: they’re the background guarantees that, even under pressure, keep systems online, users connected, and the business moving forward.

If you’re planning to scale an API platform, or are already seeing traffic spikes, ClearPeaks can support you with capacity planning, rate-limiting policy design, load testing, and observability, so that your services remain stable as your business grows. Get in touch with our expert team today!