12 Mar 2026 Cargowise ERP Data Ingestion

CargoWise is a comprehensive end-to-end ERP platform for the logistics, freight forwarding, and supply chain industries. It integrates core operational, financial, and compliance processes into a single system, so companies don’t have to work with multiple disconnected tools.

What CargoWise is used for:

- Freight forwarding operations (air, ocean, and road)

- Customs and compliance management

- Warehouse and transport management

- Accounting and financial control

- Billing, invoicing, and cost management

- Shipment tracking and visibility

- Document management and automation

Why companies use CargoWise:

- It centralises data across departments and geographies

- It automates logistics workflows

- It supports regulatory compliance across markets

- It improves operational efficiency, accuracy, and visibility

- It enables multi-currency, multi-language, and multi-company capabilities for global operations

In short, CargoWise is often chosen as the main operational system for logistics companies, providing control and visibility across the supply chain.

Cargowise as a Data Source

Here at ClearPeaks we’ve worked with CargoWise as a data source. It runs on a highly transactional relational database designed to support real-time, day-to-day logistics operations.

This means the database is optimised for:

- High volumes of transactions

- Frequent reads and writes

- Data consistency and integrity

- Real-time operational processes

The CargoWise transactional database stores, for example:

- Shipments

- Transport orders

- Customs declarations

- Warehouse records

- Costs, rates, and invoicing

- Logistics events and statuses

- Operational documents

- Master data (customers, vendors, routes, etc.)

Every operational action (creating a shipment, issuing an invoice, updating an event) generates transactions in this database.

CargoWise is not a data warehouse by design. It provides operational and financial reports that users can extract from the application, but these are based on its OLTP model rather than a dimensional model.

What’s more, business users can access CargoWise at a company level; it is not designed to support reporting across a region or globally. Effectively, this means users can generate reports for a specific company, but not consolidated reporting across multiple companies, such as a region (for example, Europe) or a global view.

To enable more advanced analytics, we designed a BI solution that extracts data from the transactional database and loads it into an external data warehouse on the Azure data platform. We created a mirror of the transactional database in Azure SQL Database, which we then used as the source for loading data into the CargoWise dimensional model. The reasons for creating the mirror are explained later.

Our solution was implemented on the Microsoft stack using Azure Synapse, with an SQL database as the data warehouse:

Figure 1: Solution architecture to ingest data from CW

The Cost of CargoWise Data Extraction

Querying the CargoWise transactional database incurs a cost, so every query contributes to the overall bill. In our solution, we created a mirror so we could query the data without repeatedly hitting the source system.

Metadata-Driven Ingestion

Another challenge was to develop a data ingestion strategy that was agile and fast, given that we had to ingest hundreds of tables. Building separate data integration packages or pipelines for each table would have been a nightmare, so we chose to implement a metadata-driven ingestion strategy in Azure Synapse.

For more detail on the approach, take a look at our blog post Metadata-driven strategy, where we explain that implementing this method requires a control table that lists the tables to be ingested and the parameters needed to ingest them, such as the load behaviour (full or incremental). Instead of creating one pipeline per table, we implemented a single parameterised pipeline that reads its configuration from a control table we called MainControlTable.

Once this solution was in place, we only had to maintain MainControlTable to add new tables, or to activate/deactivate tables in the ingestion process. We didn’t need to modify the ETL pipeline itself.

It’s important to understand what each column in MainControlTable needs to contain:

- Id: Unique identifier for the control table record.

Example:

- SourceObjectSettings: Defines which object is ingested from the source system (for example, a table or view including schema, a custom SQL query, or a filename or path).

Example:

- SourceConnectionSettingsName: Logical name for the source connection configuration (for example, a linked service, credentials, or a source type).

- CopySourceSettings: Defines how data is read from the source (for example, an SQL reader query, or partitioning).

Example:

- SinkObjectSettings: Defines the target object for the ingestion (for example, a target table including schema, or a data lake path).

Example:

- SinkConnectionSettingsName: Logical name for the target connection configuration (for example, a sink linked service, credentials, or a storage type).

- CopySinkSettings: Defines how data is written to the target (for example, write behaviour such as insert/upsert, or pre-copy scripts such as truncate/delete).

Example:

- CopyActivitySettings: General Copy Activity configuration. Field mapping between the source and sink is defined here.

Example:

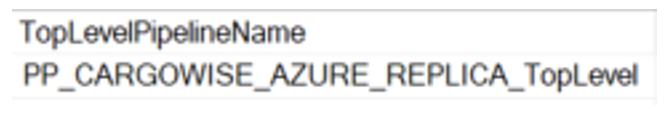

- TopLevelPipelineName: Name of the main orchestration pipeline.

Example:

- TriggerName: Name of the trigger associated with the ingestion run.

Example:

- DataLoadingBehaviorSettings: Defines the data loading strategy (full, delta, CDC – Change Data Capture).

Example:

- TaskId: Logical identifier of the task within the ingestion framework; useful when a pipeline processes multiple ingestion tasks.

- CopyEnabled: Flag indicating whether the ingestion is enabled (1) or disabled (0).

- WatermarkColumnName: Name of the column used for incremental loading (for example, LastModifiedDate or CreatedDate).

Example:

- WatermarkColumnStartValue: Initial value of the watermark; defines the starting point for the first incremental load.

Example:

Data Ingestion Strategy

As we mentioned at the beginning of this post, an optimal ingestion strategy is essential for minimising costs. We used three different load strategies, depending on table size:

- Full Load

- Delta Load

- CDC

Both delta and CDC are incremental, with the difference being whether the table includes a last modified date field. If the table didn’t have this field, we used the audit table (CDC), where inserts, updates (old and new values) and deletes are recorded for each primary key.

After analysis, we realised that it was worth doing a full load for tables with fewer than 5,000 rows. Based on table size, we then decided which strategy to follow.

The technical approach for each strategy is described below:

Full Load

In the full load strategy, tables are fully refreshed on each run. The target table is truncated and then reloaded from the source using a Copy activity, where the mapping is defined in the CopyActivitySettings field in MainControlTable.

Figure 2: Full load strategy schema

Delta Load

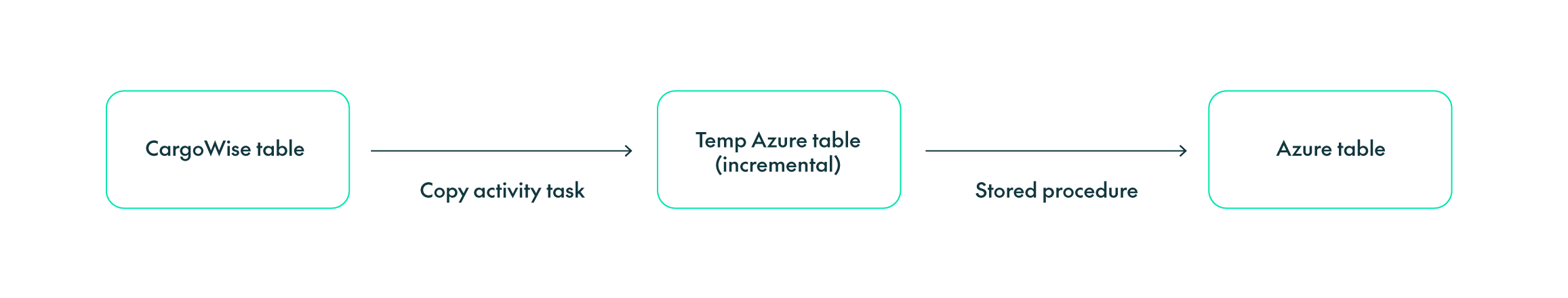

In the delta load strategy, tables are refreshed incrementally. We first load the incremental data into a temporary table (truncate and insert), then merge it into the final mirror table using an upsert (a MERGE statement in SQL Server) with a stored procedure.

To extract changes since the previous run, the process uses the column defined in WatermarkColumnName in MainControlTable, together with the watermark value stored there. Field mapping is taken from CopyActivitySettings, and the stored procedure name is defined in CopySinkSettings.

Figure 3: Delta load strategy schema

CDC

For the CDC strategy, the source differs from the other two. In this case, we get the information from the CargoWise audit database, where row-level operations (insert, delete, update) are stored. These tables include an operation field to distinguish the different operations: 1 = delete, 2 = insert, 3 = update old value, 4 = update new value.

First, we load the audit table incrementally into a temporary table. The main complexity here is that multiple audit rows can exist for the same primary key. For example, a record can be created and then modified shortly afterwards, or modified several times since the last ingestion run. Bearing this in mind, we had to make sure that we loaded the most recent row for each primary key in the mirror table. This is done in a stored procedure, and the example below shows how we defined it:

Figure 4: Example of the stored procedure that loads data from temp table to mirror table

- First, keep only the latest operation for each primary key (delete earlier rows for the same key).

- Then delete rows from the mirror table where the primary key exists in the temporary table with operation number 1, 2, or 4.

- Finally, load the rows that have been inserted or updated.

The process uses WatermarkColumnName in MainControlTable as the watermark column, along with CopyActivitySettings for the mapping and CopySinkSettings for the stored procedure name. This load strategy can be summarised in the following schema:

Figure 5: CDC load strategy schema

Conclusions

CargoWise is a logistics ERP backed by a transactional database that can be used as a data source for analytical models. However, querying this database incurs a cost, so the amount of data extracted has a direct impact on the overall bill. To keep consumption under control, it’s important to define an optimal ingestion strategy, whilst remaining fast and maintainable.

By creating a mirror of the transactional database on Azure and using a metadata-driven ingestion strategy, we were able to build analytical models efficiently and keep monthly costs under control.

If you’d like support with CargoWise ingestion, ClearPeaks can help you to assess your current extraction patterns, select the right loading strategy, and implement the solution you need. Don’t hesitate to get in touch with our expert team!