08 Apr 2026 Model Context Protocol vs. Function Calling: A Practical Comparison for AI Agents

The ability of Large Language Models (LLMs) to interact with external systems is what really separates a simple chatbot from a genuinely useful autonomous agent. One of the latest attempts to standardise this interaction is the Model Context Protocol (MCP).

This blog post explores how far MCP can be pushed in practice and finds that, whilst a standardised protocol offers clear benefits, it can also introduce unexpected efficiency costs.

The Starting Point: What Exactly is MCP?

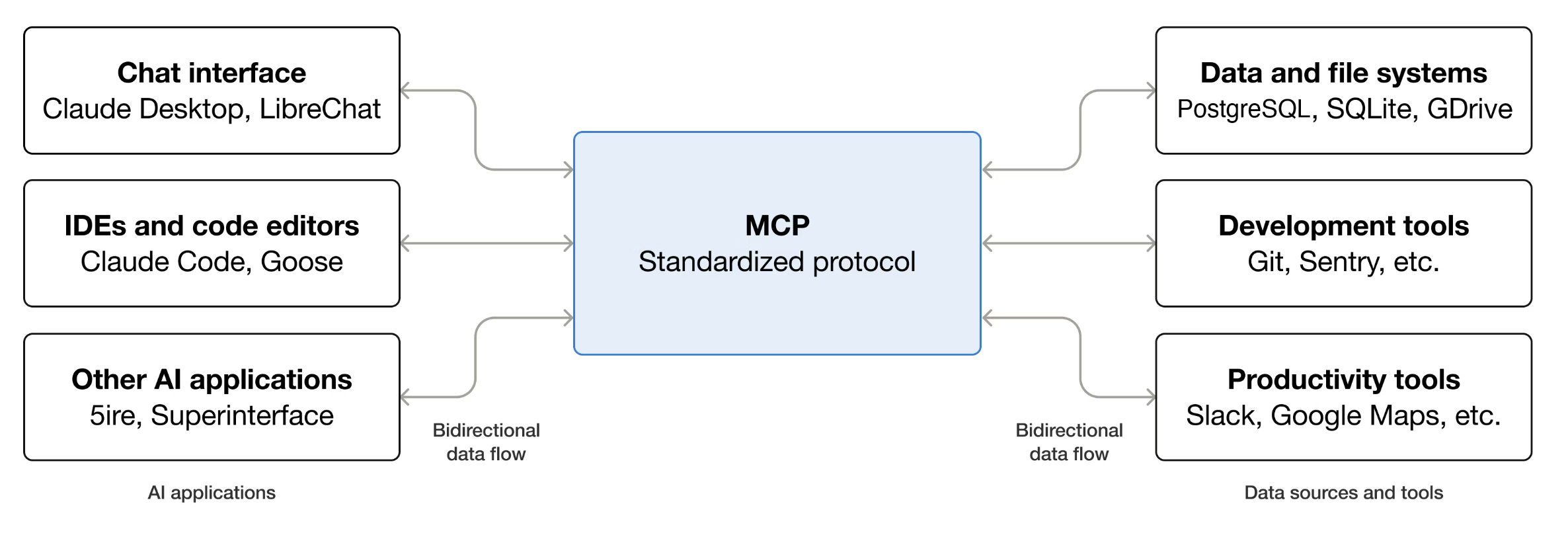

MCP is an open-source standard designed to connect LLMs with external systems. Although LLMs are highly capable, they often operate without direct access to live information or external systems, relying instead on their training data. MCP addresses this limitation by providing a standardised way for AI agents to interact with external services and data sources in real time.

Through MCP, we can provide AI agents with specific context to perform a variety of tasks, such as:

- Making API requests to external services (like Slack or Google Drive).

- Querying databases to retrieve user-specific information.

- Creating files locally to automate documentation or coding workflows.

MCP moves us away from static chatbots to agents capable of executing tasks by interacting with a structured set of tools.

Source: modelcontextprotocol.io
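To make "a structured set of tools" more concrete: MCP messages use the JSON-RPC 2.0 envelope, with a client discovering tools via a `tools/list` request and invoking one via `tools/call`. The sketch below builds such messages using only the standard library. The `search_hotels` tool, its fields, and the `make_request` helper are hypothetical illustrations, not taken from the project described in this post.

```python
import json


def make_request(request_id, method, params=None):
    """Build a JSON-RPC 2.0 request, the envelope MCP messages use."""
    message = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        message["params"] = params
    return message


# Hypothetical tool description, shaped like one entry of a tools/list result.
SEARCH_HOTELS_TOOL = {
    "name": "search_hotels",
    "description": "Find hotels near a given place.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "place": {"type": "string"},
            "radius_km": {"type": "number"},
        },
        "required": ["place"],
    },
}

# The client first asks the server which tools exist...
list_request = make_request(1, "tools/list")

# ...and later asks it to run one of them with concrete arguments.
call_request = make_request(
    2,
    "tools/call",
    {"name": "search_hotels", "arguments": {"place": "Barcelona"}},
)

print(json.dumps(call_request, indent=2))
```

Each tool description like the one above is text that ends up in front of the model, which becomes relevant once many tools are registered.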

The Research Context: Project Evolution

To evaluate the protocol in practice, our internal project progressed through several development stages:

- Exploration: Investigating official servers with tools that ranged from SMTP emailers to GitHub-based version control processes.

- Creation: Building a custom Python-based MCP server to test basic tool implementation.

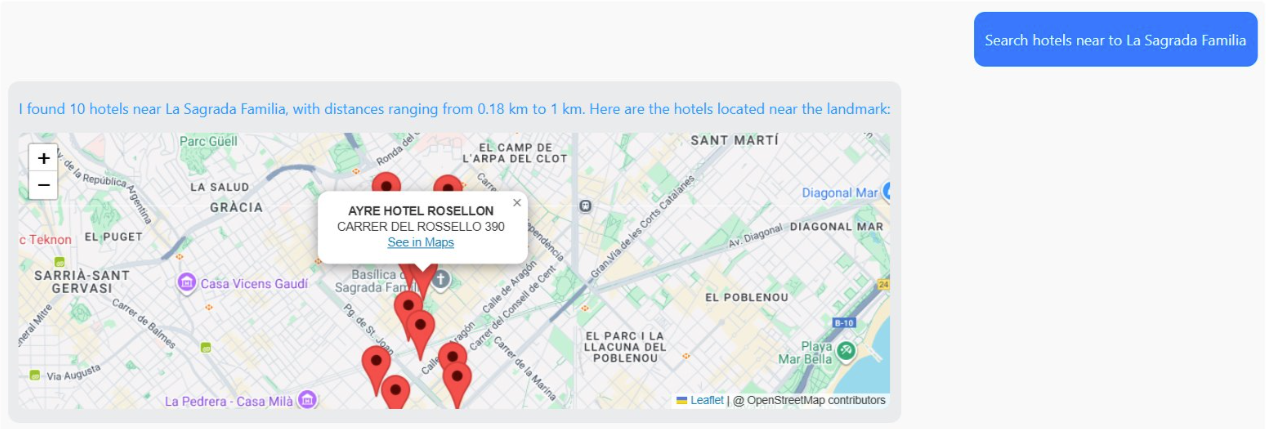

- Complex Implementation: Developing a more complex, multi-layer project (frontend, backend, and database): a travel chatbot built with N8N, Apache ECharts, and the Amadeus for Developers API. The API's endpoints let the chatbot retrieve information about airports, available flights, and nearby activities or attractions, making it possible to build an assistant that supports travel planning tasks.

One of the chatbot features is the ability to search for hotels near a specific place and display them on a map.

Whilst the chatbot was functional, metrics such as latency and token usage were higher than expected. These performance issues raised an important question: is MCP the most efficient way to build a chatbot of this kind?

As a result, the project’s focus shifted to a direct comparison with a more traditional integration approach: Function Calling.

MCP vs. Function Calling (Non-MCP)

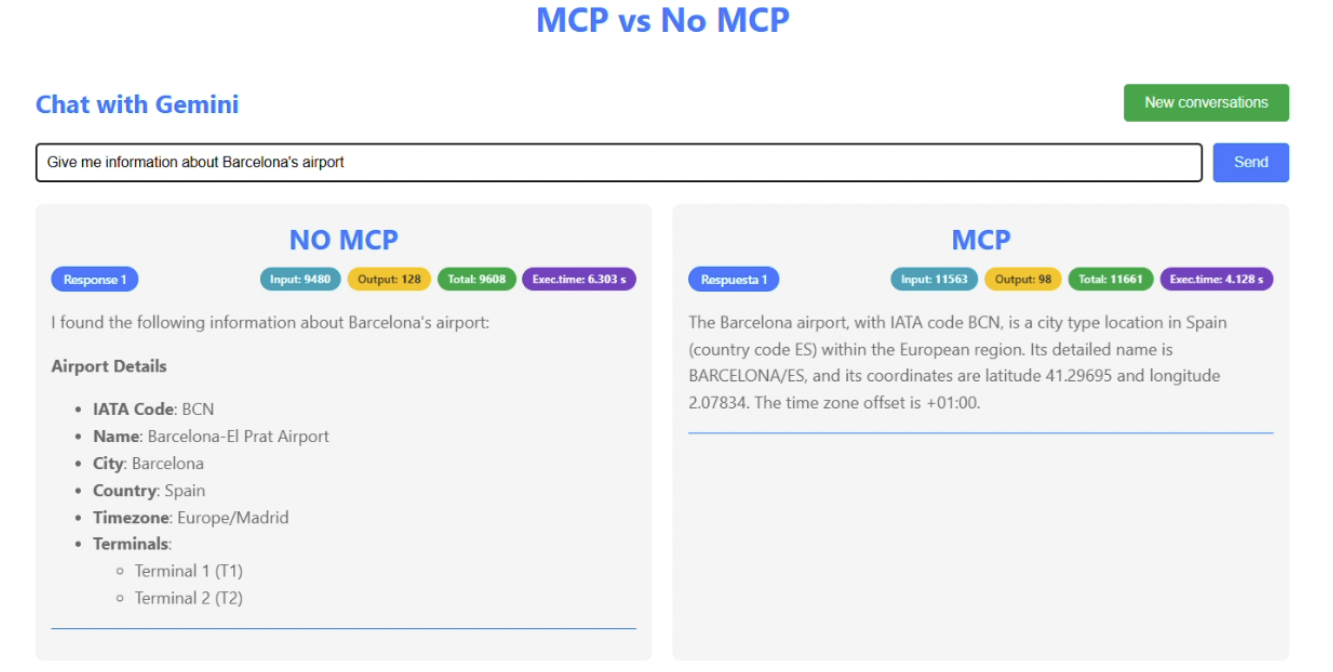

To determine whether the standardised MCP approach was worth the overhead, an equivalent version of the chatbot was built using Function Calling instead. In this version, Function Calling handled the same tool executions that the MCP server managed, including searching for flights and checking nearby hotels. The chatbot was also designed to send each message to both the MCP and non-MCP workflows simultaneously and to display their performance metrics on screen.

Frontend view of the comparison between MCP and non-MCP
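A side-by-side harness of this kind can be sketched as follows. This is only a minimal illustration under stated assumptions: the two workflow functions are hypothetical stubs standing in for the real MCP and Function Calling backends, and the metric names are invented for the example.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def call_mcp_workflow(message):
    """Hypothetical stand-in for the MCP-backed workflow."""
    return {"reply": f"[mcp] {message}"}


def call_function_calling_workflow(message):
    """Hypothetical stand-in for the Function Calling workflow."""
    return {"reply": f"[non-mcp] {message}"}


def timed(workflow, message):
    """Run one workflow and attach its wall-clock response time."""
    start = time.perf_counter()
    result = workflow(message)
    result["seconds"] = time.perf_counter() - start
    return result


def compare(message):
    """Send the same message to both workflows at once and collect metrics."""
    workflows = {
        "mcp": call_mcp_workflow,
        "non_mcp": call_function_calling_workflow,
    }
    with ThreadPoolExecutor(max_workers=len(workflows)) as pool:
        futures = {
            name: pool.submit(timed, workflow, message)
            for name, workflow in workflows.items()
        }
        return {name: future.result() for name, future in futures.items()}


metrics = compare("Find flights from BCN to LIS")
for name, result in metrics.items():
    print(name, f"{result['seconds']:.4f}s", result["reply"])
```

Running both calls in parallel keeps the comparison fair for a given message, since both workflows see the same input at roughly the same moment.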

Both versions were then subjected to a stress test: a typical travel-planning conversation consisting of eight turns (16 messages in total) was simulated, designed to exercise every available tool. The results pointed to a significant performance overhead in the current MCP implementation:

Performance Comparison (8-Turn Dialogue)

| Metric | Model Context Protocol (MCP) | Function Calling (Non-MCP) | Difference |
| --- | --- | --- | --- |
| Total Tokens | 151,837 | 85,603 | +77% tokens with MCP |
| Avg. Response Time | 16.99 seconds | 12.78 seconds | MCP ~33% slower (Function Calling takes ~25% less time) |
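The percentages can be re-derived from the raw figures in the table. The short calculation below uses only those four numbers; the helper name `percent_more` is just for illustration. Taking Function Calling as the baseline, MCP used about 77% more tokens and took about 33% longer per response, which is the same gap as Function Calling taking about 25% less time than MCP.

```python
# Figures taken from the 8-turn comparison table.
mcp_tokens, fc_tokens = 151_837, 85_603
mcp_seconds, fc_seconds = 16.99, 12.78


def percent_more(value, baseline):
    """How much larger `value` is than `baseline`, in percent."""
    return (value - baseline) / baseline * 100


# MCP measured against the Function Calling baseline.
token_overhead = percent_more(mcp_tokens, fc_tokens)
time_overhead = percent_more(mcp_seconds, fc_seconds)

# The same latency gap, expressed as time saved relative to MCP.
time_saving = (mcp_seconds - fc_seconds) / mcp_seconds * 100

print(f"Token overhead with MCP: {token_overhead:.1f}%")
print(f"Extra response time with MCP: {time_overhead:.1f}%")
print(f"Time saved by Function Calling: {time_saving:.1f}%")
```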

Why Does This Happen?

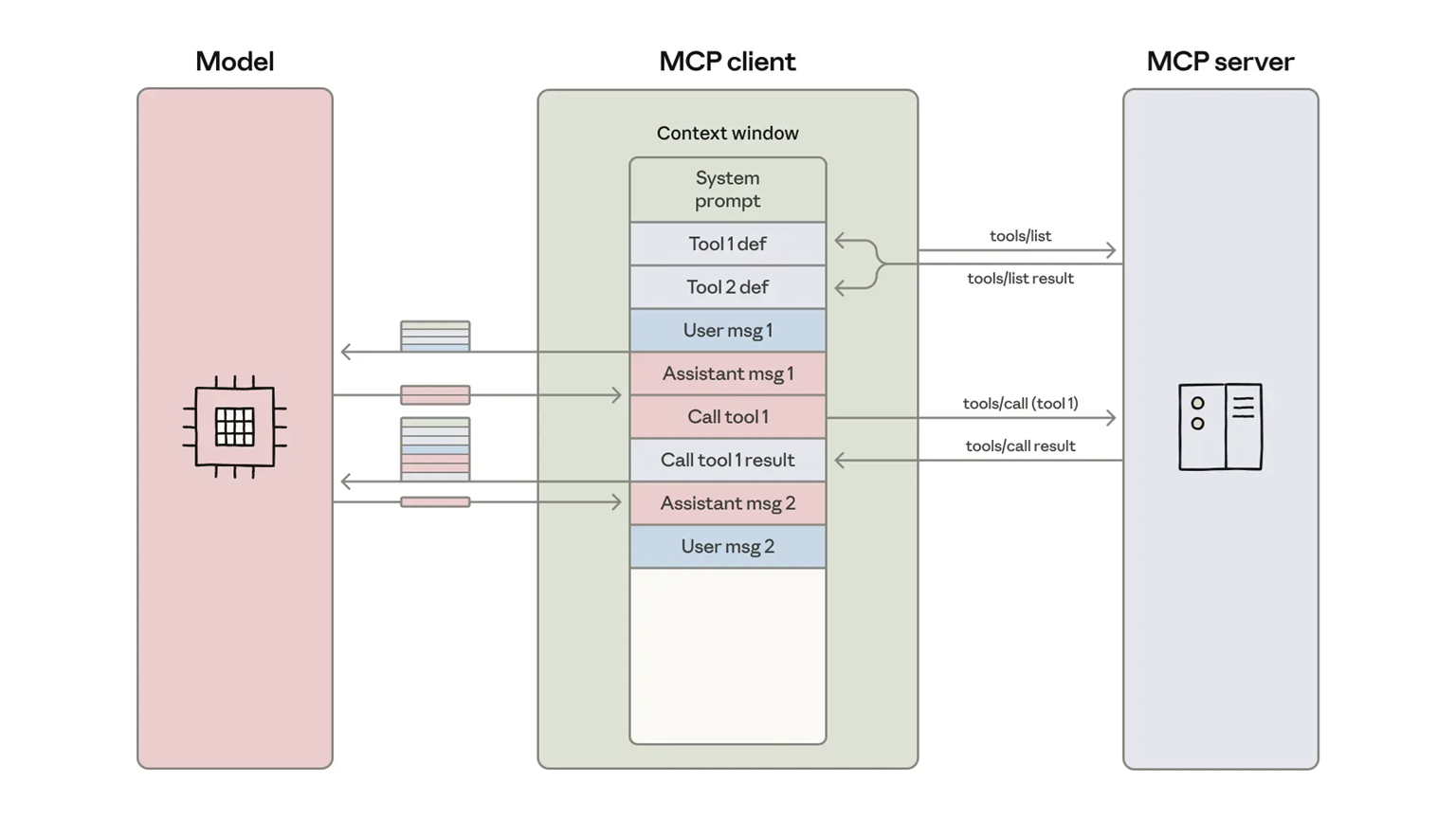

The performance gap stems from how each system introduces tools to the AI model:

- MCP (the universal standard): MCP favours modularity. A server is built once, and any compatible client can use it. However, this creates a load-all behaviour, where the model is presented with every tool available on the MCP server even when only one is required.

- Function Calling (the direct approach): Specific tools are defined directly within the application logic. It provides the model with only the schemas needed for the current task, reducing the risk of confusion with other tools.
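The difference between the two approaches can be sketched in a few lines. Everything here is an assumption made for illustration: the tool names and schemas are hypothetical, and `estimate_tokens` uses a crude four-characters-per-token heuristic rather than a real tokenizer.

```python
import json

# Hypothetical tool schemas; names and fields are illustrative only.
TOOLS = {
    "search_flights": {
        "name": "search_flights",
        "description": "Search available flights between two airports on a date.",
        "parameters": {"origin": "string", "destination": "string", "date": "string"},
    },
    "search_hotels": {
        "name": "search_hotels",
        "description": "Find hotels near a given place.",
        "parameters": {"place": "string", "radius_km": "number"},
    },
    "search_airports": {
        "name": "search_airports",
        "description": "Look up airports matching a city or keyword.",
        "parameters": {"keyword": "string"},
    },
    "search_activities": {
        "name": "search_activities",
        "description": "List activities and attractions near a location.",
        "parameters": {"latitude": "number", "longitude": "number"},
    },
}


def estimate_tokens(payload):
    """Very rough heuristic (an assumption): about four characters per token."""
    return len(json.dumps(payload)) // 4


def load_all(tools):
    """Load-all behaviour: every registered schema goes into the context."""
    return list(tools.values())


def select_tools(tools, needed):
    """Direct approach: pass only the schemas the current task needs."""
    return [tools[name] for name in needed]


everything = load_all(TOOLS)
selected = select_tools(TOOLS, ["search_flights"])

print("load-all schema tokens:", estimate_tokens(everything))
print("selected schema tokens:", estimate_tokens(selected))
```

With only four small tools the gap is modest, but it grows with every additional tool or server, since the load-all payload is sent on each request.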

Key Comparison Metrics

- Token Consumption

The most significant weakness of MCP is context bloat. Every tool description consumes space in the model’s limited context window. In the flight-search tests, for example, the MCP implementation consumed nearly twice as many input tokens per request. This formula explains the elevated token usage:

Servers × Tools per Server × Tokens per Tool = Context Consumed

In a high-traffic production environment, this means hitting context limits faster and a significantly higher monthly API cost.
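As a worked instance of the Servers × Tools per Server × Tokens per Tool relationship, the sketch below plugs in made-up numbers. The server count, tool count, tokens per tool, and request volume are all hypothetical and are not measurements from the project; the formula also assumes every tool description is roughly the same size.

```python
def context_consumed(servers, tools_per_server, tokens_per_tool):
    """Tokens spent on tool descriptions in a single request."""
    return servers * tools_per_server * tokens_per_tool


def monthly_overhead_tokens(context_tokens, requests_per_month):
    """Tool-description tokens accumulated over a month of traffic."""
    return context_tokens * requests_per_month


# Hypothetical setup: 3 servers, 10 tools each, about 150 tokens per tool.
per_request = context_consumed(servers=3, tools_per_server=10, tokens_per_tool=150)

# Hypothetical traffic: 100,000 requests per month.
per_month = monthly_overhead_tokens(per_request, requests_per_month=100_000)

print(f"Tool-description tokens per request: {per_request:,}")
print(f"Tool-description tokens per month: {per_month:,}")
```

Under these assumed numbers, tool descriptions alone account for 4,500 tokens on every request before the user's message is even considered.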

- Latency

Response time has a direct impact on user experience. Because MCP requires the model to process a large number of tool definitions before addressing the user’s request, additional latency is introduced. In the tests, the non-MCP implementation was consistently 30–40% faster, returning flight results while the MCP version was still reasoning through a much larger context.

- Reliability

An AI agent’s accuracy depends heavily on how clearly its instructions are presented. With MCP, the system prompt can become noisy, cluttered with documentation for tools that are irrelevant to the request. The MCP version occasionally lost focus, hallucinating flight parameters or ignoring date constraints amid the surrounding tool descriptions. The Function Calling version generally produced better responses, suggesting that a more concise context can improve accuracy and tool execution.

Conclusion: When Should MCP be Used?

Does this mean MCP is not worth using? Not at all. MCP remains a sensible choice for:

- Rapid prototyping with local tools: Integrated coding assistants (such as Cursor or Claude Desktop) allow direct integration with pre-built community MCP servers, enabling quick functionality testing with minimal coding.

- Standardisation and reusability: MCP can also be valuable in larger teams, where the same tool needs to be shared across multiple models.

However, for production environments, like the travel chatbot, the verdict is clear: Function Calling is the stronger option. It offers the level of control needed to keep costs lower, latency shorter, and responses more accurate. Whilst MCP can simplify integration work during development, the token overhead is still too high for many consumer-facing products.

If you are evaluating how to connect LLMs to external systems, the choice of integration approach matters as much as the model itself. ClearPeaks helps organisations to assess these factors in practice, from prototyping agent workflows to designing architectures that balance flexibility, cost, latency, and reliability. Get in touch with our experts to identify the right integration method for your use case and turn promising AI concepts into efficient, production-ready solutions.